Beyond Text: The Rise of the "Robotics Factory"

For the last two years, the technology world has been obsessed with disembodied minds. We have marveled at chatbots that can write poetry, debug code, and pass the bar exam. But while the digital world was busy generating text, a quieter, heavier revolution was building in the hardware labs. We are finally moving beyond text. We are entering the era of "Physical Intelligence."

The "Robotics Factory" is not just a building where robots are assembled. It is a new paradigm of software development where we don't code movements; we teach them. Companies like Figure, Tesla, and Agility Robotics are not just building machines; they are building data pipelines that translate pixels into motor torque.

I have spent the last decade tracking industrial automation, from the rigid arms of the automotive floor to the chaotic shelves of e-commerce fulfillment. The shift I am seeing today is unlike anything since the introduction of the PLC (Programmable Logic Controller). We are moving from "programming" robots to "training" them. But as anyone who has actually tried to deploy these systems knows, reality is a lot messier than a simulation.

Defining the Robotics Factory: It's a Data Problem

To understand why this is trending now, you have to look at the convergence of three technologies: high-density batteries, powerful edge compute, and Vision-Language-Action (VLA) models.

In the old world (let's call it Robotics 1.0), if you wanted a robot to pick up a cup, you had to mathematically define the cup's coordinates, the arm's trajectory, and the grip force. If the cup moved two inches to the left, the robot would grab thin air, or worse, crush the table. It was brittle.
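
That brittleness can be sketched in a few lines of Python. The coordinates, tolerance, and function name below are illustrative assumptions, not taken from any real controller:

```python
import math

# Hypothetical pick point in metres, taught once during setup.
TAUGHT_CUP_POSITION = (0.50, 0.20, 0.10)
GRIPPER_TOLERANCE_M = 0.01  # assumed: the gripper tolerates 1 cm of error


def scripted_pick(actual_cup_position):
    """Robotics 1.0 style: always drive to the taught point, never look."""
    error = math.dist(TAUGHT_CUP_POSITION, actual_cup_position)
    return error <= GRIPPER_TOLERANCE_M  # True means the grasp succeeds


# Cup exactly where it was taught: the pick works.
on_target = scripted_pick((0.50, 0.20, 0.10))

# Cup nudged two inches (about 0.051 m) sideways: the arm closes on thin air.
nudged = scripted_pick((0.50, 0.20 + 0.0508, 0.10))

print(on_target, nudged)
```

Nothing in the script ever observes the cup, so no amount of tuning the taught point helps once the scene changes.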

The new "Robotics Factory" approach treats physical movement like a language translation problem. Instead of translating English to French, the model translates Visual Input (Camera pixels) to Action Output (Joint movement). The "Factory" is the simulation environment—often running on NVIDIA's Isaac Sim or similar platforms—where these robots practice tasks millions of times in a virtual world before they ever touch a real object.

Real-World Friction: The "Sim-to-Real" Gap

This sounds like magic, but in practice, the transition from a digital training ground to a physical warehouse is violent. We call this the "Sim-to-Real" gap, and it is where most pilot programs go to die.

In real workflows, teams notice that light is the enemy. I recently observed a deployment of autonomous mobile robots (AMRs) that worked perfectly in the morning. But at 4:00 PM, the sun hit the warehouse skylights at a specific angle, creating a high-contrast shadow on the concrete floor. The robots’ vision systems interpreted this shadow as a physical trench—a drop-off. For two hours every day, the entire fleet would grind to a halt, stranded by a trick of the light. No amount of coding fixed this; the model had to be re-trained to understand "shadows are safe."
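
How that retraining was actually done is not described here. One plausible approach is data augmentation: paint synthetic shadows onto training images while keeping the "safe floor" label unchanged. The sketch below assumes grayscale images stored as nested lists of floats. The function name, label string, and darkness factor are all hypothetical:

```python
import random


def add_synthetic_shadow(image, rng, darkness=0.4):
    """Darken a random horizontal band of a grayscale image (rows of 0..1 floats)."""
    height = len(image)
    top = rng.randrange(height)
    bottom = rng.randrange(top, height) + 1  # band covers rows top..bottom-1
    return [
        [pixel * darkness for pixel in row] if top <= y < bottom else list(row)
        for y, row in enumerate(image)
    ]


rng = random.Random(42)
flat_floor = [[0.8] * 6 for _ in range(4)]  # uniformly lit concrete
label = "floor_is_traversable"

# Each augmented copy keeps the original label: a shadow is not a trench.
augmented = [(add_synthetic_shadow(flat_floor, rng), label) for _ in range(3)]
print(len(augmented), augmented[0][1])
```

A real pipeline would vary the shape, angle, and softness of the shadow, and would usually do this inside the simulator rather than on finished images.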

One issue that keeps coming up is the "Squishy Object" problem. In a simulation, a box is a rigid cube. In reality, a cardboard box that has been sitting in humid Florida air for three weeks is soft. When a robot arm grips it with the standard force, the box deforms. The vacuum seal breaks. The payload drops. I’ve watched million-dollar robotic cells fail simply because the packaging tape on a box was slightly glossier than the training data expected, confusing the depth sensors.

The Technology: How VLA Models Work

So, what is actually powering these new machines? It isn't just a faster processor. It is a new architecture often called the Vision-Language-Action (VLA) model.

Think of it as a ChatGPT that has hands. In a traditional setup, the vision system (eyes) and the control system (hands) were separate. The eyes said "Cup is at X,Y,Z" and the hands calculated how to get there. In a VLA model, it is one continuous neural network. The image goes in, and the motor commands come out. There is no intermediate "math" that humans can read.
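
To make the "image in, motor commands out" idea concrete, here is a toy sketch of the interface only. The class name, the seven-joint arm, and the random linear layer are all assumptions made for illustration. A real VLA model is a large trained network, not this:

```python
import random

NUM_JOINTS = 7  # assumed: an arm with seven joints


class ToyEndToEndPolicy:
    """Stand-in for a VLA model: one function from pixels + text to joint targets."""

    def __init__(self, num_pixels, seed=0):
        rng = random.Random(seed)
        # One random linear layer, purely to show the shape of the mapping.
        self.weights = [
            [rng.uniform(-1, 1) for _ in range(num_pixels + 1)]
            for _ in range(NUM_JOINTS)
        ]

    def act(self, pixels, instruction):
        # Crude text feature: the instruction's length, scaled to roughly 0..1.
        text_feature = min(len(instruction), 100) / 100
        features = list(pixels) + [text_feature]
        # No explicit "cup is at X,Y,Z" step: features go straight to commands.
        return [sum(w * f for w, f in zip(row, features)) for row in self.weights]


policy = ToyEndToEndPolicy(num_pixels=4)
joint_targets = policy.act(pixels=[0.1, 0.9, 0.4, 0.3], instruction="pick up the cup")
print(len(joint_targets))  # one target per joint
```

The point of the sketch is what is missing: there is no separate perception output that a human could inspect between the camera and the motors.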

This allows for "semantic" commands. You can tell a modern robot, "Clean up that spill," and it understands:

- Identify the liquid (Visual).

- Identify a tool—sponge or towel (Context).

- Plan a wiping motion (Action).

- Stop when the surface looks dry (Feedback).
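
The four steps above can be sketched as a simple closed loop. This is a mental model only: in an end-to-end model these stages are not separate pieces of code, and the wetness score, tool names, and thresholds here are invented for illustration:

```python
def clean_spill(wetness, tools, absorb_per_wipe=0.3, dry_threshold=0.05, max_wipes=20):
    """Toy perceive-plan-act loop for "Clean up that spill".

    wetness: 0.0 (dry) to 1.0 (soaked), standing in for what the camera sees.
    """
    # Context: pick a suitable tool from whatever is available.
    tool = next((t for t in ("sponge", "towel") if t in tools), None)
    if tool is None:
        return "no_tool", 0, wetness

    wipes = 0
    # Feedback: re-check the surface after every wipe and stop once it looks dry.
    while wetness > dry_threshold and wipes < max_wipes:
        wetness = max(0.0, wetness - absorb_per_wipe)  # action: one wiping motion
        wipes += 1
    return tool, wipes, wetness


print(clean_spill(wetness=0.8, tools={"towel", "mug"}))
```

The `max_wipes` cap matters as much as the dryness check: a loop that trusts only its visual feedback can scrub forever if the sensor never reports "dry."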

This "End-to-End" control is what allows companies like Figure to demo robots that make coffee after learning from demonstrations of humans doing it, rather than from hand-written motion code.

Where This Approach Breaks Down (The Latency Trap)

While the demos are impressive, we need to talk about the bottleneck: Inference Latency.

In a text chat, if the bot takes 2 seconds to answer, it’s annoying. In robotics, if the bot takes 2 seconds to decide how to balance, it falls over. The computation required to process high-resolution video and generate motor controls is massive.
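
The arithmetic behind this is a per-tick time budget. The sketch below assumes a 50 Hz balance loop and a 15 ms on-board inference time. Both numbers are illustrative, not measurements from any particular robot:

```python
def fits_control_budget(inference_ms, control_hz, other_overhead_ms=0.0):
    """Return (fits, period_ms): does one decision fit inside one control tick?"""
    period_ms = 1000.0 / control_hz
    return (inference_ms + other_overhead_ms) <= period_ms, period_ms


# A 50 Hz loop leaves 20 ms per tick.
chat_style = fits_control_budget(inference_ms=2000, control_hz=50)
on_board = fits_control_budget(inference_ms=15, control_hz=50)
print(chat_style, on_board)
```

At these assumed numbers a 2-second response overshoots the budget by a factor of 100, which is why chat-grade latency is not merely slow for a balancing robot but unusable.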

The obvious fix is to offload that computation to the cloud. It sounds efficient in theory, but in practice you cannot run a bipedal robot on the cloud: if the Wi-Fi jitters, the robot trips. These machines therefore need very powerful computers on board (in their chests or heads), which drives up the cost and drains the battery. Battery life is a major breakdown point I see in industrial pilots. A humanoid robot carrying a supercomputer might only last 2–4 hours, and in a 24/7 fulfillment center that downtime destroys the ROI.
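
A rough way to see the ROI hit is to turn runtime into fleet size. The sketch below uses the 2 to 4 hour runtime range from the text. The one-hour charge time and the ten stations are assumptions, and the model ignores swap time and battery aging:

```python
import math


def robots_needed_for_coverage(runtime_h, charge_h, stations=1):
    """Robots required so every station is always staffed, ignoring swap time."""
    availability = runtime_h / (runtime_h + charge_h)  # fraction of time working
    robots = math.ceil(stations * (runtime_h + charge_h) / runtime_h)
    return availability, robots


# charge_h=1.0 and stations=10 are assumed values for illustration.
for runtime_h in (2.0, 4.0):
    availability, robots = robots_needed_for_coverage(runtime_h, charge_h=1.0, stations=10)
    print(f"{runtime_h:.0f} h runtime: {availability:.0%} available, {robots} robots")
```

Under these assumptions, covering ten stations around the clock takes 15 robots at 2 hours of runtime and 13 at 4 hours, so every hour of battery life shows up directly in the hardware bill.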

Case Study: The Agility Robotics "Digit" Deployment

Let's look at a concrete example of this in the wild: Amazon's testing of Agility Robotics' "Digit."

- The Mission: Move empty yellow totes (bins) from a conveyor belt to a shelf.

- The Reality: It’s boring. And that’s the point. Digit isn't doing backflips. It is walking on legs rather than wheels.

- Why Legs? Because existing warehouses are built for humans. They have stairs, curbs, and uneven transitions. Wheels get stuck; legs step over.

- The Result: The pilot showed that while Digit could do the work, the integration was the hard part. It wasn't about the robot picking up the tote; it was about the robot navigating a space crowded with erratic humans without freezing in a "safety stop" every 30 seconds.

Comparison: Traditional Automation vs. The New "Robotics Factory"

It is crucial to distinguish between the robot arms we have had for 40 years and this new wave.

| Feature | Traditional Automation (Robotics 1.0) | New "Robotics Factory" (Embodied AI) |
|---|---|---|
| Programming | Hard-coded (If X, move to Y) | Learned (Watch and imitate) |
| Environment | Strictly controlled (Cages, flat floors) | Unstructured (Messy homes, stairs) |
| Adaptability | Zero (Change the part, break the code) | High (Generalizes to new objects) |
| Cost of Deployment | High upfront engineering hours | High upfront hardware cost, lower coding |
| Safety Mode | Physical cage / Light curtains | Semantic understanding / Force sensing |

Who Should NOT Use This Approach

The hype cycle is peaking, but you should not be buying a general-purpose humanoid robot in 2025/2026 if:

- You need high-speed throughput: A specialized "gantry" robot or a conveyor system moves goods 10x faster than a bipedal robot ever will. If your product is uniform (e.g., bottling Coke), humanoids are a waste of money.

- You have a "High-Mix, Low-Volume" shop: If you make 50 different custom parts a day, the training time for the robot to recognize each new part will eclipse the time it takes a human machinist to just do it.

- You operate in extreme environments: Dust, extreme heat, or high magnetic fields wreak havoc on the delicate sensors of these new machines. Old-school hydraulic robots are still the kings of the foundry.
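
The high-mix, low-volume point above is really a break-even calculation: training time is a fixed cost per new part, so it only pays off at volume. A minimal sketch, with every number an assumption chosen for illustration:

```python
def robot_beats_human(units_per_part, training_h_per_part,
                      robot_min_per_unit, human_min_per_unit):
    """Compare total hours for one new part type, including robot training time."""
    robot_h = training_h_per_part + units_per_part * robot_min_per_unit / 60
    human_h = units_per_part * human_min_per_unit / 60
    return robot_h < human_h, robot_h, human_h


# Assumed: 4 h to train per new part, robot 2 min/unit, human 6 min/unit.
small_batch = robot_beats_human(5, 4.0, 2.0, 6.0)
large_batch = robot_beats_human(1000, 4.0, 2.0, 6.0)
print(small_batch[0], large_batch[0])
```

With these inputs the robot loses badly on a batch of five and wins comfortably on a batch of a thousand. A shop making 50 different custom parts a day lives almost entirely in the first case.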

The "Jack of All Trades" Fallacy

What the current viral marketing gets wrong is the idea of the "General Purpose" robot. The narrative is: "Humans can do everything, so a robot shaped like a human should do everything."

But biology is a compromise. We have legs because we evolved from tree-climbers, not because legs are the most efficient way to move on a flat concrete floor. Wheels are better for floors. Tracks are better for mud. Suction cups are better for lifting boxes than fingers.

The "Robotics Factory" will likely not result in a world of C-3PO clones. It will result in specialized form factors driven by general intelligence. A forklift that can "see" and "reason" is infinitely more valuable to a warehouse than a robot that walks on two legs just to look cool.

FAQ: Common Questions on the Robotic Shift

Will these robots replace human workers?

In the short term, no. They are replacing "tasks," not "jobs." They are taking over the repetitive, injury-prone motion of lifting 40 lb boxes. In the long term, however, labor markets will absolutely shift. The role of "warehouse picker" will evolve into "fleet manager."

How expensive are these systems?

A collaborative robot (Cobot) arm costs around $25,000–$40,000. A full humanoid like the Unitree H1 or Tesla Optimus (projected) falls in the $20,000–$100,000 range, but the maintenance contracts and software subscriptions can double that annually.
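
To see what recurring fees do to the sticker price, here is a small cost sketch. It reads "can double that annually" as yearly fees roughly equal to the purchase price. That reading is an interpretation, and the three-year horizon is an assumption:

```python
def total_cost_of_ownership(purchase_price, years, recurring_fraction=1.0):
    """Purchase price plus yearly maintenance and software fees.

    recurring_fraction=1.0 assumes yearly fees equal to the purchase price,
    which is an interpretation of the text rather than a quoted figure.
    """
    return purchase_price + years * purchase_price * recurring_fraction


# Low and high ends of the humanoid price range quoted above.
for price in (20_000, 100_000):
    print(price, total_cost_of_ownership(price, years=3))
```

Under that reading, three years of ownership costs four times the purchase price, so the contract terms deserve at least as much scrutiny as the hardware quote.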

Is the software open source?

There is a growing open-source movement (see ROS 2, the Robot Operating System), but the high-end "brains" (the VLA models) are currently proprietary moats held by companies like Google (DeepMind), OpenAI, and specialized startups.

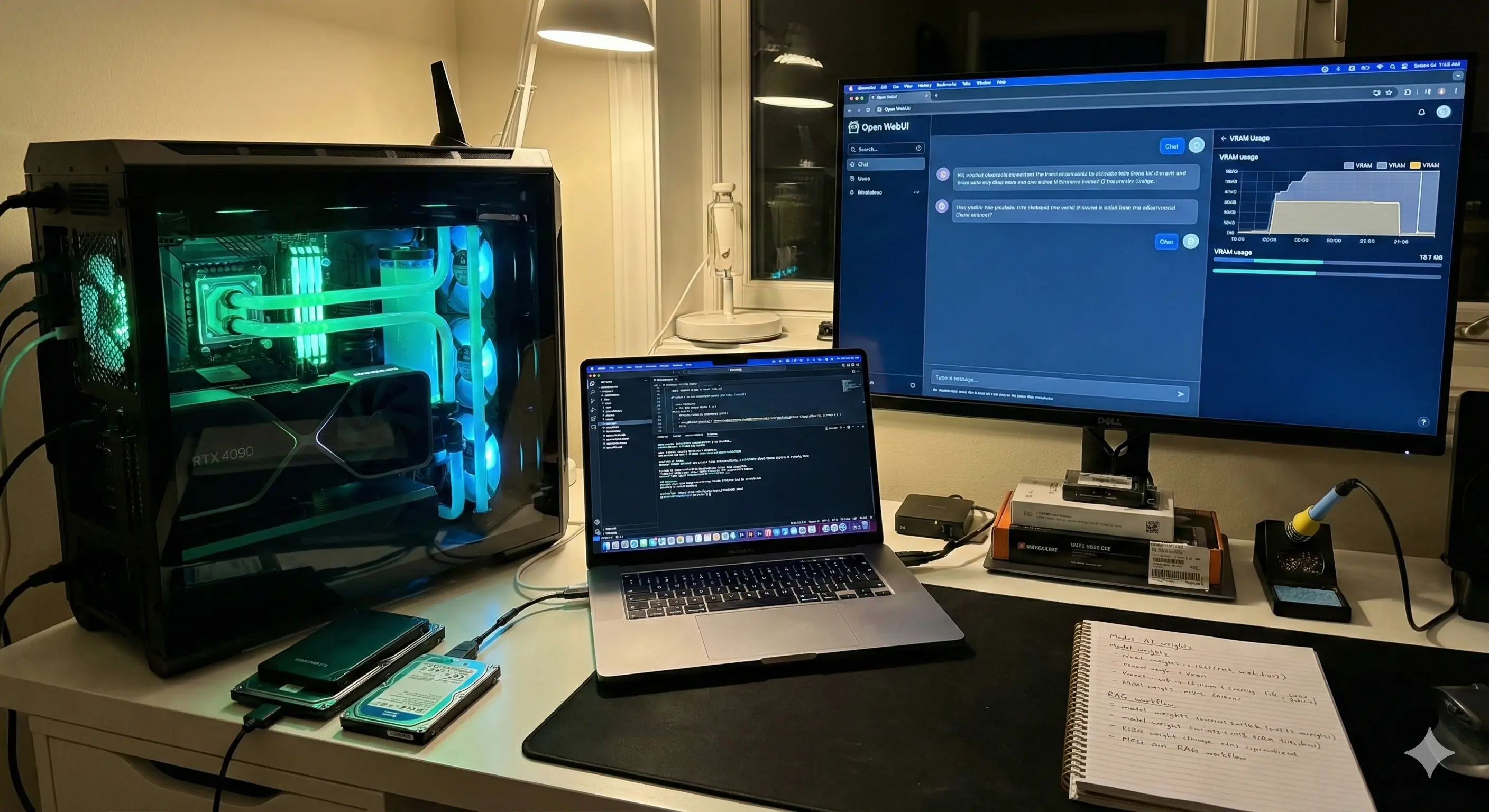

Can I build this at home?

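Surprisingly, yes, though not cheaply. The "ALOHA" project from Stanford is a low-cost, open-source hardware system for bimanual teleoperation. Its authors reported a build cost of under $20,000, which is cheap by research-lab standards, and it lets builders collect demonstration data and train their own mini-models.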

The Takeaway

The "Robotics Factory" is open for business, but the product isn't finished. We are in the "brick phone" era of physical intelligence. The hardware is clunky, the battery life is short, and the signal drops.

But the direction of travel is clear. We are no longer writing scripts for machines; we are raising them. For businesses, the move today isn't to buy a robot. It is to audit your physical workflows. Identify the tasks that require judgment, not just movement. That is where the robots are coming, and this time, they will have the eyes to see the mess they are cleaning up.

About the Author: Albert is a tech analyst and industrial automation strategist. He covers the supply chain of physical intelligence, from silicon to servos, helping enterprises navigate the transition to embodied computing.

Disclaimer: This article is for informational purposes only. It does not constitute investment or technical advice. Adoption of industrial robotics requires professional safety assessments and compliance with local labor and machinery regulations.