Case Study: How Tesla Turned Its Cars Into The World's Largest Data Collection Engine

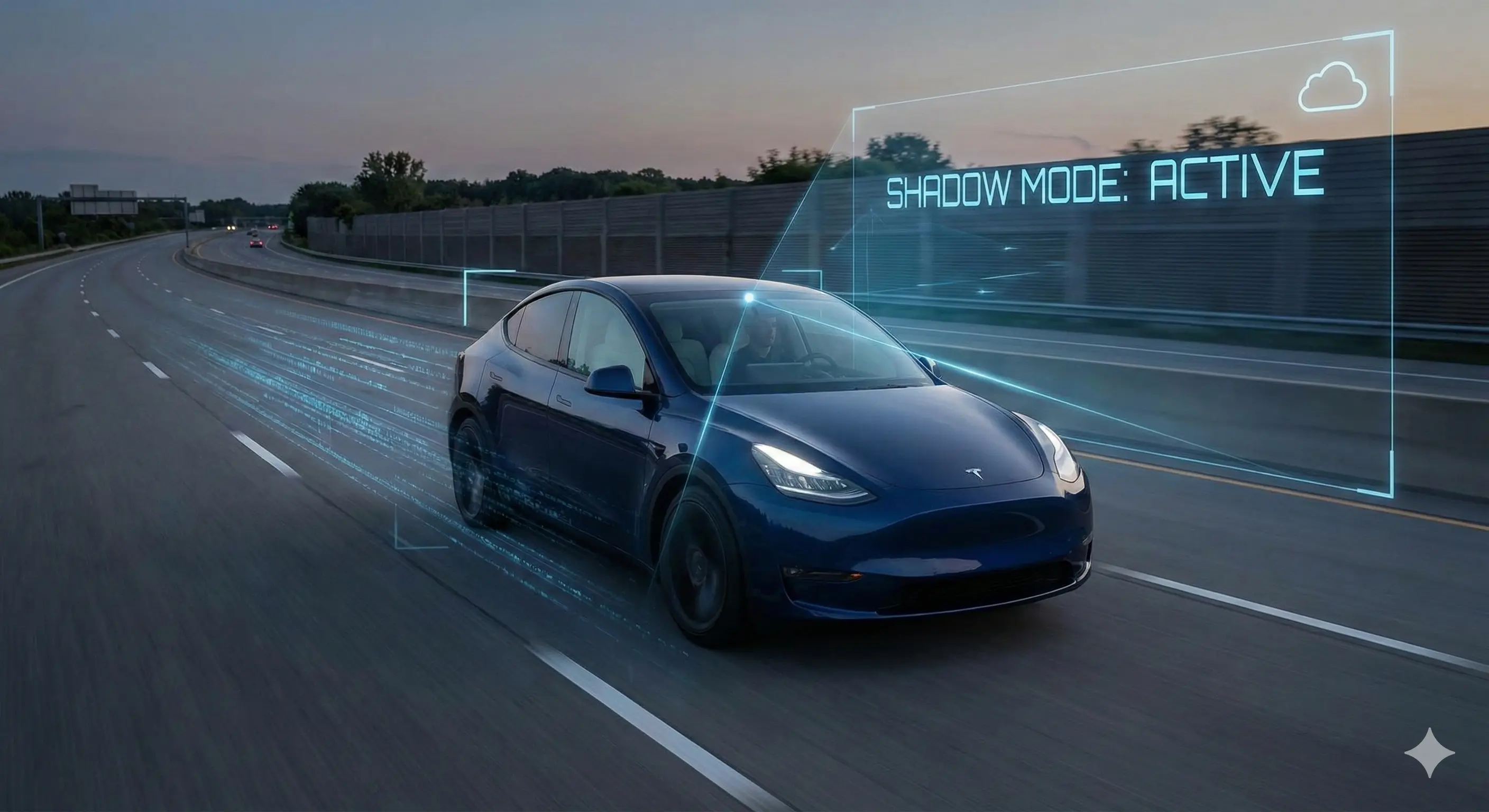

When you look at a Tesla Model Y, you are looking at a very nice electric vehicle. But if you look at it through the eyes of a data engineer, you see something entirely different: a roaming, battery-powered sensor node that happens to carry passengers.

For the last decade, the automotive industry has been obsessed with horsepower and range. Meanwhile, Tesla quietly executed one of the most significant pivots in industrial history. They stopped treating cars as isolated hardware and started treating them as distributed network devices. This isn't just about software updates; it's about the "Data Engine"—a feedback loop that allows the fleet to learn from the collective experience of millions of drivers.

Most manufacturers test their systems with a few hundred specialized vehicles. Tesla tests with millions of customer-owned cars. In this case study, we are going to look under the hood of this strategy, dissect how "Shadow Mode" actually works, and candidly discuss where this vision-based approach hits a wall in the real world.

The Mechanics of the "Fleet Learning" Loop

To understand why Tesla's approach is different, you have to look at the sheer scale of the input. Traditional mapping companies drive streets manually with expensive rigs. Tesla outsources this work to you, the owner.

Every modern Tesla is equipped with eight external cameras providing 360-degree visibility at up to 250 meters of range. But here is the misconception: the car is not constantly streaming video back to the servers. If it did, the cellular data costs across a fleet of millions of vehicles would be prohibitive.
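A rough back-of-envelope calculation shows why continuous streaming is impractical. The per-camera bitrate (2 Mbit/s) and daily driving time (one hour) below are assumptions for illustration, not Tesla figures. Under those assumptions, one car would produce 7.2 GB per day, or roughly 7.2 petabytes per day for a million cars.

```python
def daily_stream_gb(cameras: int, mbps_per_camera: float, hours_driven: float) -> float:
    """Gigabytes per car per day if every camera streamed continuously while driving."""
    bits_per_second = cameras * mbps_per_camera * 1e6
    seconds = hours_driven * 3600
    return bits_per_second * seconds / 8 / 1e9  # bits -> bytes -> gigabytes (decimal)


def fleet_daily_pb(per_car_gb: float, vehicles: int) -> float:
    """Scale a per-car daily figure to the whole fleet, in petabytes."""
    return per_car_gb * vehicles / 1e6


# Hypothetical inputs: 8 cameras, an assumed 2 Mbit/s each, one hour of driving per day.
per_car = daily_stream_gb(cameras=8, mbps_per_camera=2.0, hours_driven=1.0)
print(f"{per_car:.1f} GB per car per day")
print(f"{fleet_daily_pb(per_car, 1_000_000):.1f} PB per day for a million cars")
```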

In practice, the bottleneck isn't capturing data; it's filtering it. The car uses onboard triggers to decide what is interesting enough to save. If you drive down a highway and nothing happens, that data is discarded. But if you suddenly jerk the steering wheel to avoid a pothole, or if you take over and disengage Autopilot, the system flags the surrounding 10-second clip.

This creates a curated dataset focused almost entirely on "edge cases"—the weird, messy, unpredictable parts of driving that are impossible to simulate in a lab.
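The trigger mechanism can be sketched as a rolling buffer that is only copied when something interesting happens. This is a minimal illustration, not Tesla's implementation: the frame fields, the 10 Hz rate, and the 180 deg/s steering threshold are hypothetical, and the sketch keeps only the seconds before the event (a real system would presumably also record for a few seconds afterwards).

```python
from collections import deque
from dataclasses import dataclass


@dataclass
class Frame:
    t: float                 # timestamp in seconds
    steering_rate: float     # steering wheel rate in degrees per second
    driver_disengaged: bool  # True on the frame where the driver took over


class ClipRecorder:
    """Keep a rolling window of recent frames and save it only when a trigger fires."""

    def __init__(self, window_s=10.0, hz=10, steering_rate_limit=180.0):
        # deque(maxlen=...) drops the oldest frame once the window is full,
        # so uneventful driving is discarded without any extra work.
        self.buffer = deque(maxlen=int(window_s * hz))
        self.steering_rate_limit = steering_rate_limit
        self.saved_clips = []

    def is_triggered(self, frame):
        # Hypothetical triggers: an abrupt steering input or a driver takeover.
        return abs(frame.steering_rate) > self.steering_rate_limit or frame.driver_disengaged

    def on_frame(self, frame):
        self.buffer.append(frame)
        if self.is_triggered(frame):
            # Snapshot the window leading up to the event; this copy is what would be uploaded.
            self.saved_clips.append(list(self.buffer))


recorder = ClipRecorder()
for i in range(300):  # 30 seconds of driving at 10 Hz, with one swerve at t = 20 s
    swerve = i == 200
    recorder.on_frame(Frame(t=i / 10, steering_rate=400.0 if swerve else 5.0,
                            driver_disengaged=False))
print(len(recorder.saved_clips), len(recorder.saved_clips[0]))
```

Only one 10-second clip survives out of 30 seconds of driving; the other frames were simply overwritten in the buffer.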

Shadow Mode: The Silent Observer

The smartest piece of this architecture is something called "Shadow Mode." This is where the company deploys a new version of its autonomy software to your car, but without giving it control. The new software runs in the background, making decisions on steering and braking, while you (or the older, stable software) actually drive the car.

The system then compares the Shadow code's decisions against what the human driver actually did. If the Shadow code wanted to turn left but the human went straight, the system marks a "disagreement."
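The comparison can be sketched as running a candidate planner on the same inputs the car already sees and logging, rather than executing, its output. The frame layout, the maneuver strings, and the toy `candidate` planner are hypothetical stand-ins; Tesla has not published how its shadow comparisons are structured.

```python
from typing import Callable


def run_shadow_mode(frames, shadow_planner: Callable[[dict], str]):
    """Run a candidate planner on the same inputs as the live car, without actuating it.

    Each frame is a dict with a timestamp 't', the sensor-derived 'scene',
    and 'human_action', the maneuver the driver actually performed.
    """
    disagreements = []
    for frame in frames:
        proposed = shadow_planner(frame["scene"])  # computed and logged, never sent to actuators
        if proposed != frame["human_action"]:
            disagreements.append(
                {"t": frame["t"], "shadow": proposed, "human": frame["human_action"]}
            )
    return disagreements


# Toy candidate planner: turn left whenever it sees a left-turn arrow.
def candidate(scene):
    return "left" if scene.get("left_arrow") else "straight"


frames = [
    {"t": 0.0, "scene": {"left_arrow": False}, "human_action": "straight"},
    {"t": 0.1, "scene": {"left_arrow": True}, "human_action": "straight"},
    {"t": 0.2, "scene": {"left_arrow": True}, "human_action": "left"},
]
print(run_shadow_mode(frames, candidate))
```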

Validation is a recurring problem in data engineering: how do you know your update won't cause a regression? By running it in Shadow Mode across the fleet's enormous mileage, engineers can measure how often, and in which situations, the new code would have diverged from human drivers before it ever controls a customer's car. It effectively turns the customer fleet into a massive quality assurance team.
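One way to turn those disagreements into a release decision is a simple gate on the disagreement rate per mile. This is a hypothetical sketch: the function names, the 10,000-mile minimum, and the rule that the candidate must not disagree more often than the current release (measured the same way) are assumptions. A disagreement is only a proxy, since the human is not always right and a divergence is not a crash.

```python
def rate_per_1k_miles(events: int, miles: float) -> float:
    if miles <= 0:
        raise ValueError("miles must be positive")
    return 1000.0 * events / miles


def passes_shadow_gate(candidate_events, candidate_miles,
                       baseline_events, baseline_miles,
                       max_ratio=1.0, min_miles=10_000):
    """Hypothetical release gate: the candidate must not disagree with human drivers
    more often than the current release did over comparable driving."""
    if candidate_miles < min_miles:
        return False  # not enough shadow mileage to judge yet
    candidate_rate = rate_per_1k_miles(candidate_events, candidate_miles)
    baseline_rate = rate_per_1k_miles(baseline_events, baseline_miles)
    return candidate_rate <= baseline_rate * max_ratio
```

Requiring a minimum mileage before judging avoids promoting a build that merely got lucky on a small sample.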

Where This Approach Breaks Down in Real Use

While the "Data Engine" is brilliant on a whiteboard, it faces significant friction in the real world. The reliance on cameras (Vision) over LiDAR (Light Detection and Ranging) is arguably the most controversial decision in the industry, and it has consequences.

In practice, camera-based systems struggle with what is widely known as "phantom braking." This happens when the car's computer misinterprets a shadow under a bridge or a heat mirage on the road as a solid obstacle. Because the system is designed to be cautious, it slams on the brakes.

A LiDAR sensor measures distance directly by timing laser pulses, so a shadow on the road returns the same range as the surrounding pavement and never looks like a raised object. A camera, however, has to infer depth from pixels. No matter how much data you collect, inferring 3D structure from 2D images involves a level of estimation that direct ranging sensors don't have to deal with. The sheer volume of data helps reduce these errors, but it hasn't eliminated them.
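To make "estimation" concrete, the classic two-camera stereo model shows how image-based depth error grows with distance. Tesla's stack infers depth with neural networks rather than textbook stereo matching, so this is an illustration of the underlying geometry, not a model of Tesla's system; the focal length, baseline, and disparity error are hypothetical. Because the error scales with the square of the distance, it is 100 times larger at 100 m than at 10 m.

```python
def stereo_depth_m(focal_px: float, baseline_m: float, disparity_px: float) -> float:
    """Pinhole stereo model: depth Z = f * B / d."""
    return focal_px * baseline_m / disparity_px


def depth_error_m(focal_px: float, baseline_m: float, depth_m: float,
                  disparity_error_px: float) -> float:
    """First-order error propagation: |dZ/dd| = f * B / d**2 = Z**2 / (f * B)."""
    return depth_m ** 2 / (focal_px * baseline_m) * disparity_error_px


# Hypothetical camera parameters, chosen only for illustration.
for z in (10, 50, 100):
    err = depth_error_m(focal_px=1000, baseline_m=0.3, depth_m=z, disparity_error_px=0.25)
    print(f"{z:>4} m -> +/- {err:.2f} m")
```

A time-of-flight LiDAR return, by contrast, has a range error that does not grow quadratically with distance in this way.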

Furthermore, the "cleaning" of this data is a nightmare. Collecting millions of clips is easy; labeling them correctly so the computer can learn from them is incredibly labor-intensive. Even with auto-labeling tools, the system can get confused by edge cases like a person wearing a stop-sign t-shirt or a billboard featuring a picture of a tunnel.
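A common pattern for keeping human labeling effort manageable is to trust confident auto-labels and route the rest to people. The sketch below is hypothetical (the 0.9 threshold and 5% audit rate are assumptions). The stop-sign t-shirt is exactly the case a threshold alone misses, because the auto-labeler can be confidently wrong, which is why a random sample of "confident" labels is also audited.

```python
import random


def route_labels(predictions, threshold=0.9, audit_rate=0.05, seed=0):
    """Split auto-labels into accepted ones and ones sent for human review.

    predictions: iterable of (clip_id, label, confidence) tuples.
    Low-confidence labels always go to review; a random audit_rate fraction of
    high-confidence labels is reviewed too, to catch confidently wrong ones.
    """
    rng = random.Random(seed)  # seeded so the audit sample is reproducible
    accepted, review = [], []
    for clip_id, label, confidence in predictions:
        if confidence < threshold or rng.random() < audit_rate:
            review.append((clip_id, label))
        else:
            accepted.append((clip_id, label))
    return accepted, review
```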

Comparison: Tesla Vision vs. The Industry

To see where this strategy fits, it helps to compare it against other major players like Waymo (Alphabet) or Cruise (GM), which use a hardware-heavy approach.

| Feature | Tesla (Vision Only) | Waymo/Traditional (LiDAR + Maps) |
|---|---|---|
| Primary Sensor | Cameras (passive optical) | LiDAR (active laser scanning) |
| Mapping Strategy | Crowdsourced, constantly updating | Pre-mapped, geofenced areas |
| Scalability | Global (anywhere a car is sold) | Limited (city-by-city rollout) |
| Cost per Vehicle | Low (consumer hardware) | High (enterprise hardware) |
| Weakness | Weather (heavy rain/fog), optical illusions | Unmapped areas, road construction changes |

Who Should NOT Rely on This Tech

Despite the viral videos of cars driving themselves through city streets, there are specific groups who should view this technology with extreme skepticism.

If you want to create content or work while commuting, this tool is not for you. The system is an SAE "Level 2" driver assist, meaning the driver is legally and technically responsible for every second of operation. It is not a "set it and forget it" chauffeur.

Additionally, those living in areas with poor road markings, heavy snow accumulation, or non-standard traffic signals will find the system adds little value. The "Data Engine" works best where there are many Teslas driving (like California or Norway). If you live in a rural area where you are the only Tesla on the road, the fleet learning advantage is significantly weaker for your specific environment.

Case Study: The Roundabout Problem

A few years ago, the system struggled immensely with roundabouts. It was a classic software problem: the rules were hard to code explicitly.

Instead of writing new code to explain "how to drive in a roundabout," the team put out a request to the fleet. They set the cars' triggers to upload clips specifically when drivers navigated roundabouts.

They collected hundreds of thousands of examples of successful human negotiation of these intersections. The software didn't learn the "rules" of the roundabout; it learned the visual pattern of "successful pathing." Within months, the performance in roundabouts improved drastically. This is the Data Engine in action—solving logic problems with volume rather than code.
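A fleet "request" like this can be sketched as a small campaign definition that each car evaluates against its own clips. Tesla has not published such a schema, so `Campaign`, its fields, and the clip keys below are hypothetical. The filter keeps only clean traversals, since the goal was examples of humans negotiating roundabouts successfully, and caps uploads per vehicle so no single car dominates the dataset.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Campaign:
    name: str
    map_feature: str            # scenario to collect, e.g. "roundabout"
    max_clips_per_vehicle: int  # caps how much any one car uploads


def select_for_upload(campaign, clips, already_uploaded=0):
    """Return the clips this vehicle should upload for the campaign.

    Only clean traversals are kept (no hard braking, no collision warning),
    because the goal is examples of humans handling the scenario successfully.
    """
    budget = max(campaign.max_clips_per_vehicle - already_uploaded, 0)
    matches = [
        clip for clip in clips
        if clip["map_feature"] == campaign.map_feature
        and not clip["hard_brake"]
        and not clip["collision_warning"]
    ]
    return matches[:budget]
```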

Privacy and the Cost of Connectivity

You cannot talk about this level of data collection without addressing the privacy elephant in the room. While the company claims that data is anonymized and stripped of VINs (Vehicle Identification Numbers) before it hits the learning servers, the reality is complex.

This sounds reassuring, but in practice, true anonymization of GPS traces is incredibly difficult. If a trip starts at your house and ends at your office every day, it doesn't take a genius to figure out who owns the data.
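The re-identification risk takes only a few lines to demonstrate. This sketch uses no VIN, name, or account ID at all; it simply counts where trips begin and end. The function name and the 3-decimal rounding (about 110 m of latitude, enough to merge day-to-day GPS jitter) are illustrative choices.

```python
from collections import Counter


def likely_anchor_points(trips, precision=3, top_n=2):
    """Return the most frequent trip endpoints; for a commuter, usually home and work.

    Each trip is ((start_lat, start_lon), (end_lat, end_lon)). Rounding merges
    the small GPS differences between days into a single location.
    """
    counts = Counter()
    for start, end in trips:
        for lat, lon in (start, end):
            counts[(round(lat, precision), round(lon, precision))] += 1
    return counts.most_common(top_n)
```

Combined with any public record of who lives at the top location, a supposedly anonymous trace points to a specific person.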

Furthermore, the interior cameras that monitor driver attention are a point of contention. They are important safety tools when driver-assist features are active, but they are also surveillance devices inside the private space of the cabin. Owners are effectively trading a slice of their privacy for the promise of better features down the road.

FAQ: Understanding the Fleet

Does the car record everything I say?

No. The data collection is primarily video and telemetry (speed, steering angle, braking). Audio inside the cabin is generally not part of the standard fleet learning upload, although voice commands are processed to improve the voice recognition systems.

How much data does my car use?

It varies, but a car can upload gigabytes of data per month if it is connected to Wi-Fi. The heavy lifting is done over Wi-Fi to avoid cellular charges, which is why owners are encouraged to connect their cars to their home networks.
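The Wi-Fi preference can be sketched as a simple upload policy: small telemetry goes out whenever a connection exists, while large video clips wait for Wi-Fi. The 1 MB cutoff and the `Upload` type are hypothetical; Tesla has not documented its actual rules.

```python
from dataclasses import dataclass

# Hypothetical cutoff: anything larger than this waits for Wi-Fi.
CELLULAR_MAX_BYTES = 1_000_000


@dataclass
class Upload:
    name: str
    size_bytes: int


def plan_uploads(queue, on_wifi):
    """Split pending uploads into 'send now' and 'hold until Wi-Fi'."""
    send_now, hold = [], []
    for item in queue:
        if on_wifi or item.size_bytes <= CELLULAR_MAX_BYTES:
            send_now.append(item)
        else:
            hold.append(item)
    return send_now, hold
```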

Can I opt out of the data collection?

Yes, there are toggles in the software menu to turn off data sharing. However, doing so may limit your ability to use certain advanced features, and you effectively remove your vote from the "democracy" of the fleet learning process.

Is this safer than a human driver?

Statistically, on highways, the numbers look very good. According to Tesla's Vehicle Safety Report, driving with Autopilot engaged records fewer crashes per million miles than the national average. Keep in mind that Autopilot miles are mostly highway miles, which tend to have fewer crashes per mile for every driver. In complex city driving, the jury is still out. The system never gets tired or drunk, but it can be confused by things a human would instantly understand.
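Tesla's report expresses its figures as miles driven per crash; the inverse, crashes per million miles, is easier to compare. The sketch below also reweights per-road-type rates to a common mileage mix, which is one way to avoid comparing mostly-highway miles against an all-roads average. The function names and any rates passed in are illustrative; no real crash data is used here.

```python
import math


def crashes_per_million_miles(crashes: int, miles: float) -> float:
    return crashes / miles * 1_000_000


def miles_per_crash(crashes: int, miles: float) -> float:
    """The form Tesla's Vehicle Safety Report uses: miles driven per recorded crash."""
    return miles / crashes


def mix_adjusted_rate(rate_by_road: dict, mileage_share: dict) -> float:
    """Reweight per-road-type crash rates to a common mileage mix before comparing."""
    if not math.isclose(sum(mileage_share.values()), 1.0):
        raise ValueError("mileage shares must sum to 1")
    return sum(rate_by_road[road] * share for road, share in mileage_share.items())
```

With identical per-road rates, a highway-heavy mileage mix produces a much lower blended rate than a city-heavy one, so the raw comparison can flatter a highway-centric system even if it is no safer on any given road.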

Why doesn't every car company do this?

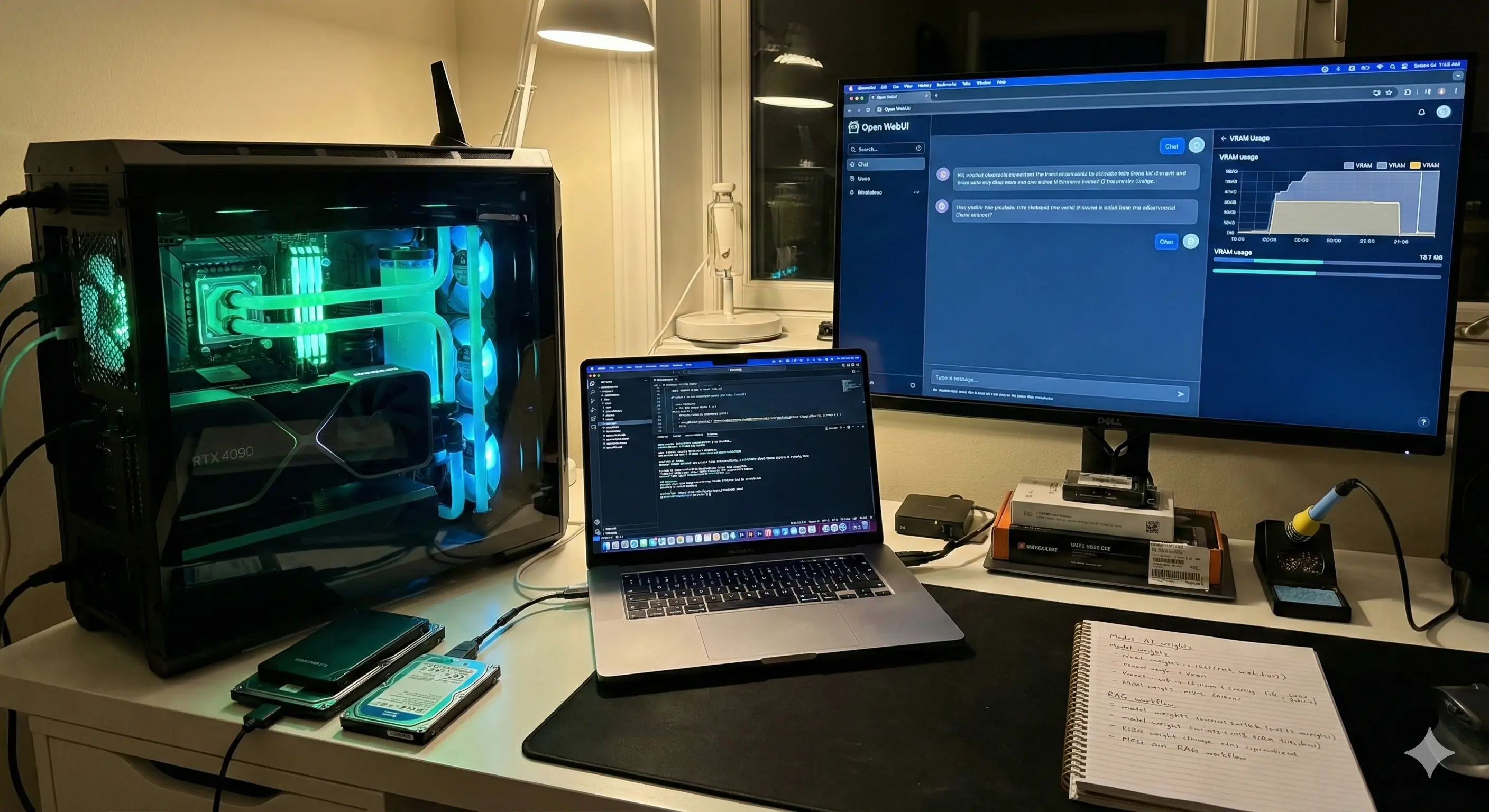

Legacy automakers have struggled to centralize their software. Most traditional cars rely on dozens of different computers from different suppliers that don't talk to each other. Tesla built their own integrated operating system from the ground up, which is the only reason this centralized data collection is possible.

The Bottom Line

Tesla has successfully convinced millions of customers to pay for the privilege of being beta testers. It is a brilliant business model that has created a defensive moat made of data.

While the promise of a car that drives itself while you sleep is still over the horizon, the mechanism building it is already here. The car in your driveway may be feeding that mechanism every time you touch the brake. For the tech-savvy driver, this is an exciting way to take part in the future. For the privacy-conscious, it's a reason to stick to vintage rides.

If you are interested in how software is eating the physical world, keep an eye on how other industries start adopting this "Shadow Mode" concept. The next time your car updates overnight, remember: you didn't just get a new feature; you likely helped build it.

Disclaimer: This article is for informational purposes only and does not constitute financial or legal advice. The automotive software landscape changes rapidly; always verify current specifications with the manufacturer.